Google is moving to deepen its partnership with Marvell to develop a new generation of chips specialized for inference, a step aimed at reducing its dependence on Nvidia and strengthening its in-house cloud infrastructure capabilities. The cooperation comes as the AI market shifts from training massive models to serving them globally.

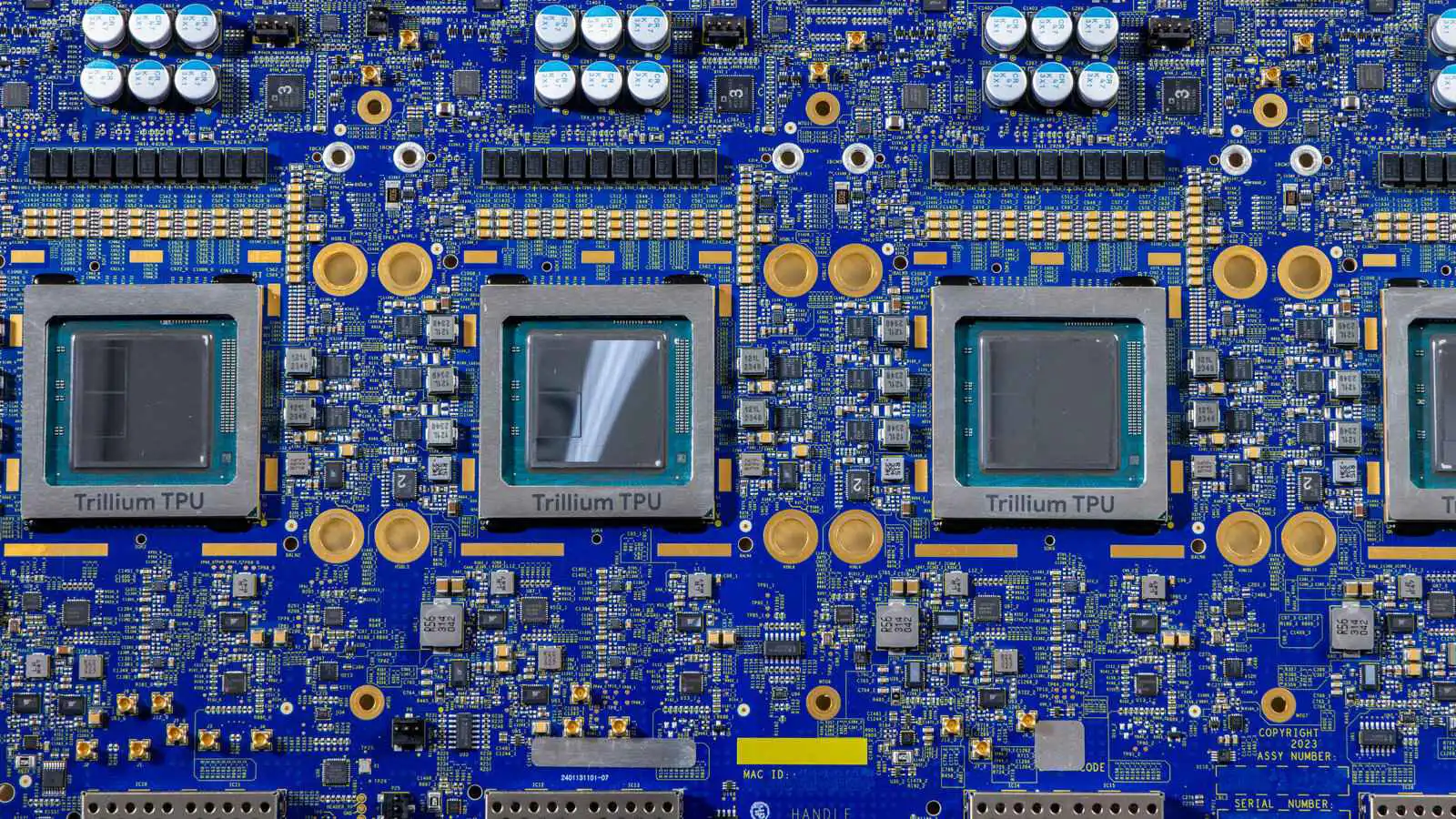

Available information indicates that Google is seeking to develop several chips, including a memory processing unit that complements its current Tensor Processing Units (TPUs) and a new TPU optimized for running large language models more efficiently. Inference is currently considered one of the most strategically important segments of the market, as it represents the largest operational cost for tech giants.

This alliance reflects the escalating competition among cloud service providers to control the entire AI value chain, from developing models to running them on custom infrastructure. The partnership is expected to shift chip market dynamics, as major companies seek to build their own solutions rather than rely entirely on external suppliers.

admin

Editor at Dijlah Point News, writing about Technology.